How to Deploy Gemma 4

Google's best open model, without the complexity.

Aryan Kargwal

PhD Candidate at PolyMTL

Published

Topic

Model Deployment

Most teams have reached a point where a chat wrapper is no longer enough. The product needs to take actions, not just answer questions. The AI layer needs to work inside a workflow, and the API bill, which was easy to justify during a proof of concept, starts to look different when the feature ships to real users.

Gemma 4 is Google's answer to what open-weight agentic AI should look like when a company actually wants to build on it. Apache 2.0 licensed, capable of multi-step reasoning and tool use, and deployable on a single GPU at production scale. The previous Gemma 3 generation was largely a research artifact. This one is built for teams who want to move AI from a chat feature into the core of their product.

What changed is not incremental. Google rebuilt the model family from the ground up, adding a Mixture-of-Experts architecture that runs at a fraction of its nominal size in active compute, a thinking mode for tasks that require genuine reasoning, and native function calling across all variants. The edge models now accept audio directly, removing an entire preprocessing layer for voice-driven applications.

This guide covers how to pick the right variant for your workload, the configuration choices that separate a stable deployment from one that drifts under load, and how Pipeshift handles the infrastructure complexity so you can focus on the product.

When to Use Gemma 4

Gemma 4 is the first open-weight family from Google that competes at frontier reasoning without requiring a cluster to run it. The 26B MoE is the primary production target for most teams. Its active parameter count per forward pass directly determines serving cost, GPU requirements, and how many concurrent requests you can handle per card.

Teams moving off managed reasoning APIs have the clearest switching case. If you're running coding assistants or document analysis on DeepSeek R1 or Qwen 3.5, Gemma 4's 31B Dense matches or beats both on reasoning benchmarks at a fraction of the managed API cost, and is self-hosted, the gap widens further. Apache 2.0 also means no licensing conversations when a customer or investor asks about your AI stack.

The economics of API consumption don't scale. Infrastructure costs do.

For output-heavy workloads, compare GPT-5.4 Nano's $1.25/M output rate against Gemma 4's $0.40/M, a gap that compounds quickly on generation-intensive tasks. Self-hosting gives you the cost structure of infrastructure rather than consumption and helps you move away from BlackBox AI instances that you cannot look inside of.

Teams building agentic pipelines are the second adoption driver. Most open models can describe what tool they would call. They'll say "I should search for X" or output something that looks like a function call if you squint at it. What they can't do reliably is produce the structured output that actually triggers an external system: a clean JSON object with the right schema, every time, without a retry loop or a post-processing script catching the malformed cases.

Before Gemma 4, getting that reliability from an open model meant building scaffolding to validate, reformat, and retry, which requires a level of AI readiness most teams are still building toward. The 31B handles this natively, outputting well-formed tool calls directly, removing that scaffolding layer and lowering the effective cost per completed task in any agent loop.

Variants of Gemma 4

Google released four models, each targeting a distinct hardware tier. The naming is worth decoding because it affects deployment decisions directly.

"E" means effective parameters, using a Per-Layer Embedding architecture that gives the edge models representational depth beyond their raw parameter count. "A" means active parameters per token in the MoE model, the 26B A4B has 25.2B total weights but activates only 3.8B per forward pass, a fraction of its parameters.

Now worth noting, the number of parameters a model activates per token does not directly determine output quality. It determines cost, speed, and how much GPU memory you need.

Where you will feel the difference is in deployment budget and in tasks that require sustained reasoning over long context, where the 31B Dense has a clear edge. If you need the model to think hard and can afford the hardware, the 31B earns it. If you need throughput at scale on a single GPU, the 26B MoE is the practical choice.

Variant | Effective Params | VRAM (Q4) | Context | Audio | Best For |

E2B | ~2.3B | ~5 GB | 128K | Yes | On-device, offline, IoT |

E4B | ~4.5B | ~6 GB | 128K | Yes | Laptops, mobile, edge agents |

26B A4B | 3.8B active | ~18 GB | 256K | No | Production API, agentic workflows, cost-sensitive deployments |

31B Dense | 30.7B | ~20 GB | 256K | No | Maximum quality, fine-tuning base, latency-predictable workloads |

For most production deployments, the decision is between the 26B MoE and the 31B Dense. The quality gap is small enough that it rarely drives the choice. What drives it is workload shape: the 26B MoE handles high-concurrency throughput workloads well, while the 31B Dense is more predictable for latency-sensitive agent calls where consistent response time matters more than raw output speed.

If you're migrating from Gemma 3, confirm two things before starting.

The

mm_token_type_idsfield is required during fine-tuning even for text-only data, its absence caused tooling failures at launch.Gemma 4 also uses standard system/user/assistant chat roles, so any custom Gemma 3 chat template needs to be replaced.

The Stack Gemma 4 Replaces

A typical multimodal production pipeline today has a vision model, a speech-to-text layer, a language model, and some detection model upstream of all of it. Each is a separate dependency, a separate failure point, and a separate cost center.

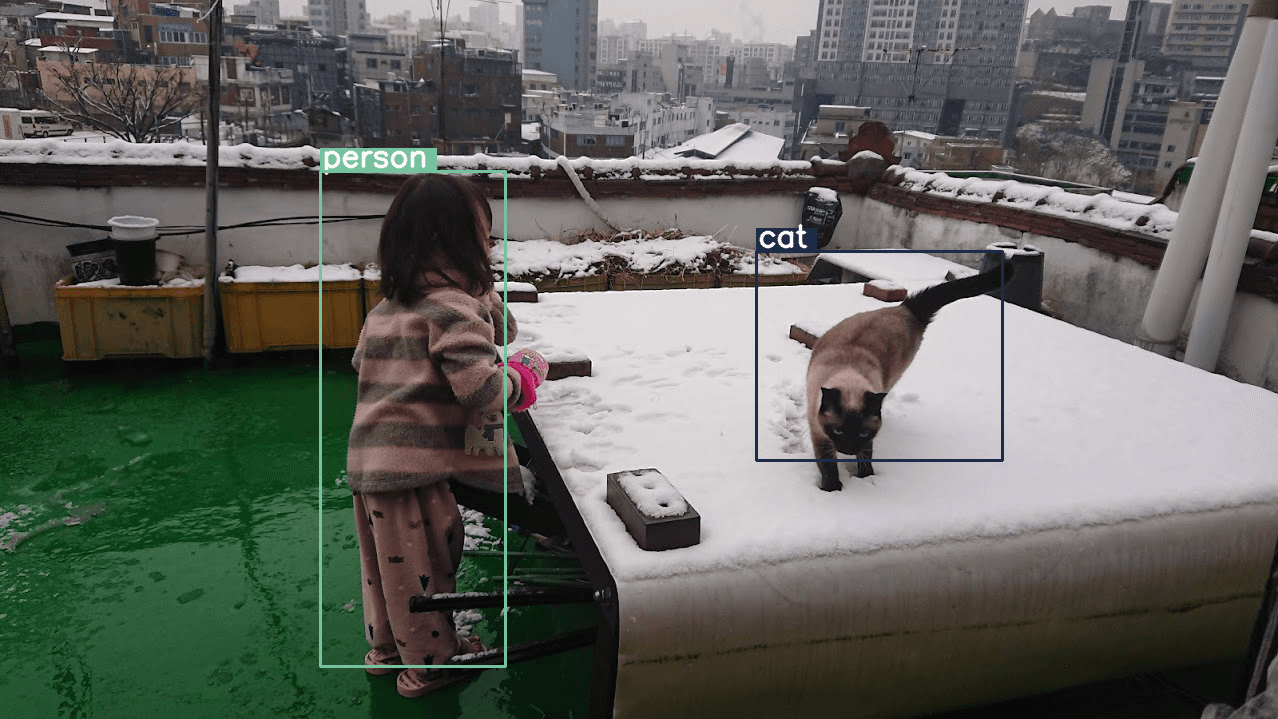

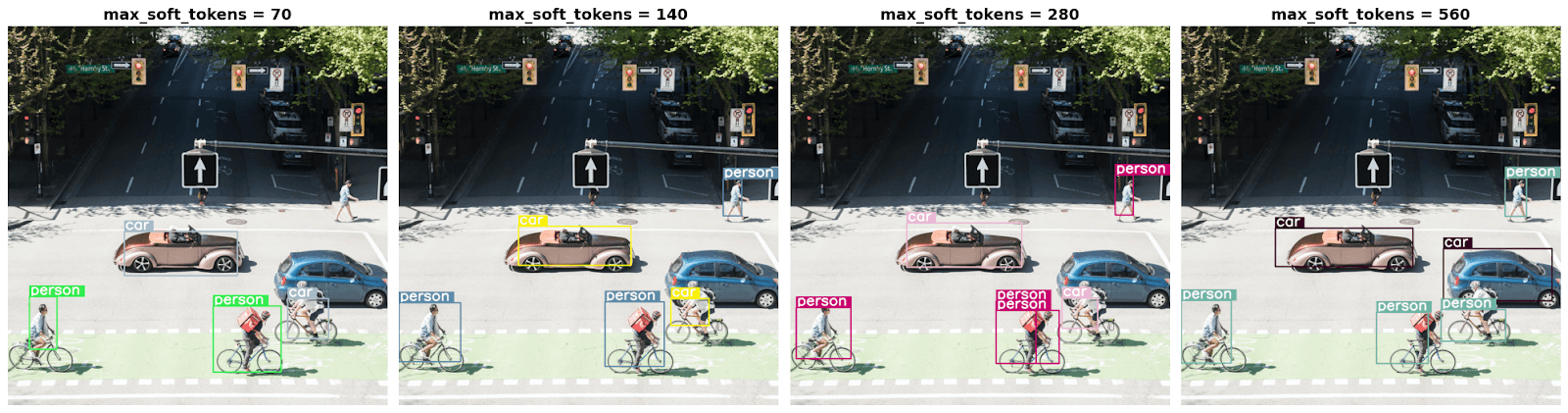

Gemma 4 handles bounding box detection, audio transcription, video understanding, and language in a single model call. The detection output is native JSON. The audio understanding is trained on speech. The vision encoder supports configurable token budgets so you are not paying full resolution cost on every frame.

None of this replaces a purpose-built pipeline at the extreme end of each capability. A dedicated segmentation model such as SAM 3 or YOLO will still beat Gemma 4 on fine-grained localization, and Whisper Large has an edge on transcription accuracy in noisy conditions.

But for teams who need good-enough across all of these without the operational overhead of four separate models, Gemma 4 is the first open-weight option that makes that trade worth taking. The Google Release has working code for each modality if you want to pressure-test the claim.

The architectural reason the memory cost stays manageable despite all of this is the shared KV cache. Later layers reuse key-value states from earlier layers rather than recomputing them, which matters most when you are running near the 256K context ceiling on the 31B.

Getting the Most Out of Gemma 4

Gemma 4 behaves consistently once it is running. The variance in cost and performance across deployments comes almost entirely from the setup around it. These four decisions are where that variance lives.

Choosing the Right Variant of Gemma 4

Most of the quality difference between precision formats at this model size is within measurement noise. What you are actually choosing is how much GPU memory the model occupies and how many requests it can handle per second as a result.

Precision | VRAM (26B MoE) | Throughput Impact | Quality Impact | When to Use |

BF16 | ~50 GB | Baseline | None | Fine-tuning, maximum quality |

FP8 | ~26 GB | +15-20% | Minimal | H100/B200 production serving |

Q4_K_M | ~18 GB | Competitive | Minor | RTX 3090/4090, single-GPU self-hosting |

Q8 | ~28 GB | Moderate | Negligible | A100 with headroom concerns |

FP8 via vLLM's --quantization fp8 is the right call for most H100 production deployments. It halves the VRAM footprint of BF16, which directly frees up memory for more concurrent sessions, and the throughput gain is real.

Q4_K_M is the practical choice for teams on 24GB consumer hardware where the alternative is multi-GPU complexity you do not need yet.

Using Context Wisely using

The 256K window is one of Gemma 4's genuine architectural improvements over Gemma 3, where long context was largely theoretical. It works now. The problem is that defaulting to it costs memory whether or not a request uses it, and most requests do not come close.

Setting --max-model-len to match your actual workload ceiling rather than the model maximum is the single highest-return configuration change for most teams. The capacity it frees goes directly to concurrent sessions.

Thinking-Mode as a Per-Task Setting

Gemma 4's thinking mode generates a full internal reasoning trace before producing the final answer. This is what drives the step-change in math and planning performance. The trace is also visible in the output, which matters for teams that need to audit or debug model decisions in production.

It costs extra tokens every time it runs. Enabling it globally means a simple greeting waits through thousands of reasoning tokens before getting a response, and the model has more surface area to hallucinate on inputs that never needed that depth in the first place. Route it only to request types where the reasoning actually changes the outcome.

Deploying Gemma 4 on Pipeshift

Most teams arrive at production with their AI stack spread across three or four providers. The language model on one, a speech layer somewhere else, an OCR model hosted separately, agents calling across network boundaries on every hop. It works until it does not.

Pipeshift is not another model host. It is one inference surface for all of it.

Your Gemma 4 deployment, your voice layer, your vision pipeline, your agent orchestration sit inside one logical cluster. There are no cross-provider hops. The routing between components is internal, which means the latency your users experience reflects actual inference time, not inference time plus whatever is happening between providers that day.

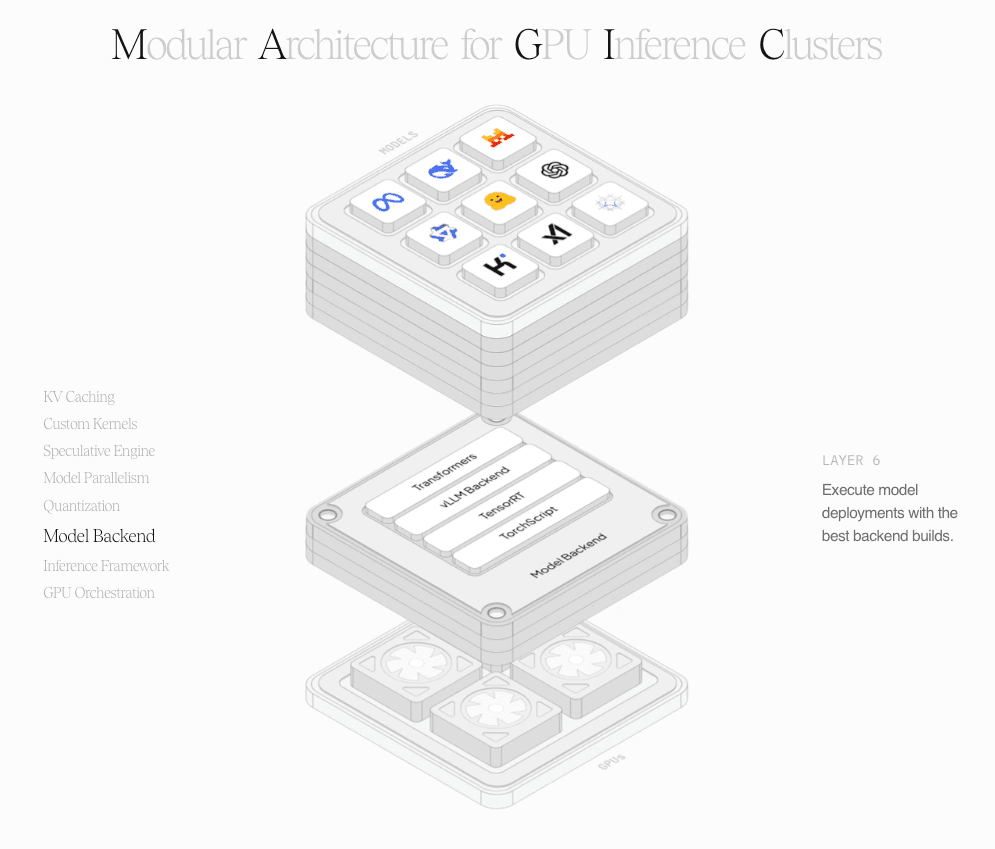

MAGIC: One Cluster, Every Engine

Underneath that single surface, MAGIC runs a heterogeneous inference cluster. Different models run on different GPU pools, different engines, different architectures, but all inside one logical boundary with no cross-provider API calls between them.

The engine decision happens at runtime, per request. A dense model like the 31B on NVIDIA hardware gets TensorRT-LLM, which has the deepest kernel-level optimization available. Similarly something like the 26B MoE would be inferred through Transformers or vLLM which provide better MoE support.

MAGIC reads the model architecture and the hardware at runtime and selects accordingly, and adapts when either changes.

For workloads that need to go deeper, custom containers drop into the cluster with their own GPU allocation, warm pool, and scaling policy, without touching anything else running alongside them.